Hi Fons, Hi Kevin,

this sounds very nice!

I've only read your email+presentation and skimmed your paper, and I have the following rather technical question:

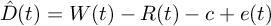

Your DLL depends on you having a delay estimator expressed in terms of the read-from-buffer samples, the written-to-buffer samples, the (ideally) constant delay, and a term for fluctuation and measurement error. So: in your paper, you rely on the timestamps Jack gives you. Now, these microsecond timestamps introduce a third clock into our problem. I can see how the control loop converges if that clock is both faster than your sampling clock and relatively well-behaved, but is this an assumption we can generally make?

Let's first just focus on the audio part (I personally think matching a 100MS/s ±2ppm stream to a whatever 31.42MS/s ±20ppb stream with a clock that has microsecond resolution and more ppm of error is out of the question):

You say:

> On the Jack side, part of the solution is already available in the server. The DLL (Delay Locked Loop) that has been part of Jack since many years computes in each cycle a prediction of the start time of the next cycle, while removing most of the jitter due to random wakeup latency. This provides a smooth and continuous mapping between time (as measured by Jack’s microsecond timer) and frame counts.

Hm, OK. So you get a time estimate. Wow! Third loop of control! Do you have any resources on that? How is that cycle start time prediction (which is, inherently, a sampling rate estimator) realized? I think "a practical approach to estimating sample rate based on two (or more) conflicting clocks on a general-purpose PC with a large-buffered processing architecture" is probably a very interesting read, though, compared to Fons' [3].
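For orientation while reading the question above, a second-order loop in the style of [3] can be sketched as follows. This is a minimal sketch and not Jack's actual code: the class name `TimeDLL`, the 0.1 Hz loop bandwidth, the 1% period mismatch, and the simulated wakeup latency of up to 1 ms are all assumptions chosen for illustration.

```python
import math
import random


class TimeDLL:
    """Second-order delay-locked loop that smooths raw cycle wakeup times."""

    def __init__(self, nominal_period_s, bandwidth_hz, t_now):
        omega = 2.0 * math.pi * bandwidth_hz * nominal_period_s
        self.b = math.sqrt(2.0) * omega      # first-order gain (damping 1/sqrt(2))
        self.c = omega * omega               # second-order gain
        self.period = nominal_period_s       # filtered period estimate
        self.t0 = t_now                      # filtered start of the current cycle
        self.t1 = t_now + nominal_period_s   # predicted start of the next cycle

    def update(self, t_now):
        """Feed one raw wakeup timestamp; return (t0, t1) for this cycle."""
        err = t_now - self.t1                # how late the wakeup was vs. prediction
        self.t0 = self.t1
        self.t1 += self.b * err + self.period
        self.period += self.c * err
        return self.t0, self.t1


def demo(cycles=4000, seed=1):
    """Run the loop on jittered wakeups; return (estimated, true) period."""
    rng = random.Random(seed)
    nominal = 1024 / 48000.0                 # hypothetical: 1024 frames at 48 kHz
    true_period = nominal * 1.01             # hypothetical: device runs 1% slow
    dll = TimeDLL(nominal, bandwidth_hz=0.1, t_now=0.0)
    for k in range(1, cycles + 1):
        raw = k * true_period + rng.uniform(0.0, 1e-3)  # wakeup latency up to 1 ms
        dll.update(raw)
    return dll.period, true_period


if __name__ == "__main__":
    est, true = demo()
    print(f"estimated period {est:.9f} s, true period {true:.9f} s")
```

The filtered period is effectively a sample-rate estimate expressed in units of the timestamp clock, which is why the quality of that third clock matters.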

So, here's my problem with the block that Kevin would like to see: I think it's unlikely that this can be implemented as a block that you drop in somewhere in your flow graph. If it works, it has to be done directly inside the audio sink. The reason is simply that, unlike audio architectures, and especially the low-latency Jack architecture, GNU Radio doesn't depend on fixed sample packet sizes, and as a result you're very likely to see very jumpy throughput. OK, imagine a simple SDR Device --> math --> Null Sink flow graph. At every inter-block buffer, the average throughput will be constant (i.e. the SDR sampling rate), which means the ratio of sample chunk size and delay will be constant. The chunk sizes, however, will either vary a lot, or converge towards either the size of the "largest granularity" block in the signal chain (e.g. a 1024-point FFT), or simply "half a buffer" if there is a computational bottleneck; in the latter case, you'll likely see buffers overflow at some point.

With a wildly varying sample chunk size, the granularity and precision of the time measurement become very important. Sure, if I can integrate over a large number of observed chunks of large size, I can easily find the mean rate. But if I have very short delays (where the timing error is relatively big compared to the delay itself), I'll need to either up the latency so that I only observe large-scale events, or I'm going to introduce loads of jitter on the output.
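To put rough numbers on this: if a chunk of N samples is timed with an error of δ seconds, the rate estimate is off by about δ divided by the chunk duration. The sketch below just does that arithmetic; the function name and the 100 µs timing error are assumptions for illustration, not measurements.

```python
def relative_rate_error(n_samples, sample_rate_hz, timing_error_s):
    """Relative error of a rate estimate when the observation window is mistimed.

    The true window is n_samples / sample_rate_hz; the measured window is
    longer by timing_error_s, so the estimated rate comes out too low.
    """
    true_window_s = n_samples / sample_rate_hz
    measured_window_s = true_window_s + timing_error_s
    estimated_rate_hz = n_samples / measured_window_s
    return abs(estimated_rate_hz - sample_rate_hz) / sample_rate_hz


if __name__ == "__main__":
    # Hypothetical 48 kHz stream, 100 microseconds of timing error per observation.
    for n in (64, 1024, 48000, 48000 * 60):
        err = relative_rate_error(n, 48000.0, 100e-6)
        print(f"{n:>8} samples: {err * 1e6:12.1f} ppm")
```

With these assumed numbers, a 64-sample chunk gives an error of roughly 7%, while a full second of samples still leaves about 100 ppm; getting down to a few ppm needs observation windows of tens of seconds.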

The problem gets even worse if the output buffer of the rate-correction block isn't directly coupled to the consuming (audio) clock: if nondeterministic error is introduced in the delay estimation, the control loop Fons showed is likely to break down at some point.
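To illustrate the sensitivity, here is a toy model, not the controller from [2]: a plain PI regulator steers a resampling ratio so that the buffer fill stays at a target, and the fill measurement is corrupted by noise. The gains, the 100 ppm rate offset, the target fill, and the noise levels are all assumptions picked for illustration.

```python
import random
import statistics


def run_loop(fill_noise_samples, cycles=3000, seed=1):
    """Simulate a PI loop steering a resampling ratio from a noisy fill estimate.

    Returns (mean, standard deviation) of the ratio over the last 1000 cycles.
    """
    rng = random.Random(seed)
    in_per_cycle = 1000.1    # input samples per output cycle (input 100 ppm fast)
    out_per_cycle = 1000.0   # samples the audio side consumes per cycle
    target = 4000.0          # desired buffer fill in samples
    kp, ki = 1e-4, 2.5e-6    # hypothetical loop gains
    fill = target
    integral = 0.0
    ratios = []
    for _ in range(cycles):
        # The controller only sees the fill level through a noisy estimate.
        measured = fill + rng.gauss(0.0, fill_noise_samples)
        err = measured - target
        integral += err
        ratio = 1.0 - kp * err - ki * integral
        # The resampler turns the input chunk into in_per_cycle * ratio samples.
        fill += in_per_cycle * ratio - out_per_cycle
        ratios.append(ratio)
    tail = ratios[-1000:]
    return statistics.mean(tail), statistics.pstdev(tail)


if __name__ == "__main__":
    for noise in (0.0, 0.5, 5.0):
        mean, spread = run_loop(noise)
        print(f"noise {noise:4.1f} samples: ratio {mean:.8f}, jitter {spread * 1e6:8.2f} ppm")
```

With a clean estimate the ratio settles at 1000/1000.1; any noise on the fill estimate shows up directly as jitter on the resampling ratio, scaled by the loop gains.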

So in this case, the throughput-optimizing architecture of GNU Radio is in conflict with the wish for a good delay estimator :( Now, I know that for things like disciplining a 10 MHz analog oscillator with GPS-supplied timing, relatively complex control loops are used; I have no idea whatsoever whether they are suitable for controlling things in a general-purpose PC. I hope Fons can shed a bit of light on how Jack handles the timing estimation!

In practice, the "best" clock in most GNU Radio flow graphs attached to an SDR receiver is the clock of the SDR receiver (RTL dongles notwithstanding); if we had a way of measuring other clocks, especially CPU time and audio time, using the sample rate coming out of these devices, that'd be rather handy for all kinds of open-loop resampling (open-loop in the sense that we hope that based on our frequency offset estimate our resampling is correct enough, and don't actually use e.g. buffer fillage).
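As a sketch of what such an open-loop measurement could look like: count how many samples the SDR delivered and how many the audio device consumed over the same interval, treat the SDR count as the time reference, and derive the audio clock's offset from nominal. The function names and the example counts are hypothetical; nothing here is an existing GNU Radio API.

```python
def clock_offset_ppm(n_ref, ref_rate_hz, n_other, other_nominal_rate_hz):
    """Offset of another clock from its nominal rate, using a reference stream.

    n_ref samples at ref_rate_hz define the elapsed time; n_other is the
    number of samples the other device handled during that same interval.
    """
    elapsed_s = n_ref / ref_rate_hz
    actual_rate_hz = n_other / elapsed_s
    return (actual_rate_hz / other_nominal_rate_hz - 1.0) * 1e6


def open_loop_ratio(offset_ppm):
    """Output/input resampling ratio that feeds a device running offset_ppm fast."""
    return 1.0 + offset_ppm * 1e-6


if __name__ == "__main__":
    # Hypothetical counts: 2 MS/s SDR as reference, nominally 48 kHz audio device.
    ppm = clock_offset_ppm(2_000_000, 2e6, 48005, 48000.0)
    print(f"audio clock offset: {ppm:.1f} ppm, resampling ratio {open_loop_ratio(ppm):.7f}")
```

The estimate is only as good as the counting interval is long, and it inherits any error of the SDR clock itself.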

Best regards,

Marcus

[1] http://kokkinizita.linuxaudio.org/papers/adapt-resamp-pres.pdf

[2] Adriaensen, Fons: "Controlling adaptive resampling", 2012, http://kokkinizita.linuxaudio.org/papers/adapt-resamp.pdf

[3] Adriaensen, Fons: "Using a DLL to filter time", 2005, http://kokkinizita.linuxaudio.org/papers/usingdll.pdf

On 26.10.2016 00:26, Kevin Reid wrote:

> On Tue, Oct 25, 2016 at 2:21 PM, Fons Adriaensen <address@hidden> wrote:
>
> > Between the two incoherent domains there is a buffer and a resampler. The resampler is adjusted so that the average number of samples 'in the pipeline' is constant. The problem is finding a reliable and noise-free estimate of that number. At both ends we don't have a clean continuous stream, but samples are written and read in blocks, and the timing of those write and read operations can be irregular. The solution is to have a delay-locked-loop (DLL) at either side to remove measured timing jitter. This provides a continuous mapping of time to the number of samples written and read. The difference between the two is average number of samples in the buffer, which is compared to a target value. The filtered difference then controls the resampling ratio.
>
> For what it's worth, if there were a GNU Radio block which implements this algorithm it would be useful to me.
>
> I develop ShinySDR which is a multipurpose receiver application which can use an arbitrary number of RF sources and software demodulators, and combine the audio outputs where applicable. That in itself presents a clock domain problem, but there are also a variety of other problematic cases (related to live reconfiguration and squelch) which could be addressed with the same solution.

_______________________________________________ Discuss-gnuradio mailing list address@hidden https://lists.gnu.org/mailman/listinfo/discuss-gnuradio